The Stakes Are Higher Than Ever

AI stopped being an experiment a while ago. It’s now baked into everything from hiring filters to hospital scheduling to what shows up in your inbox. We’ve crossed the threshold. AI isn’t on the sidelines of business or society anymore; it’s driving the plays.

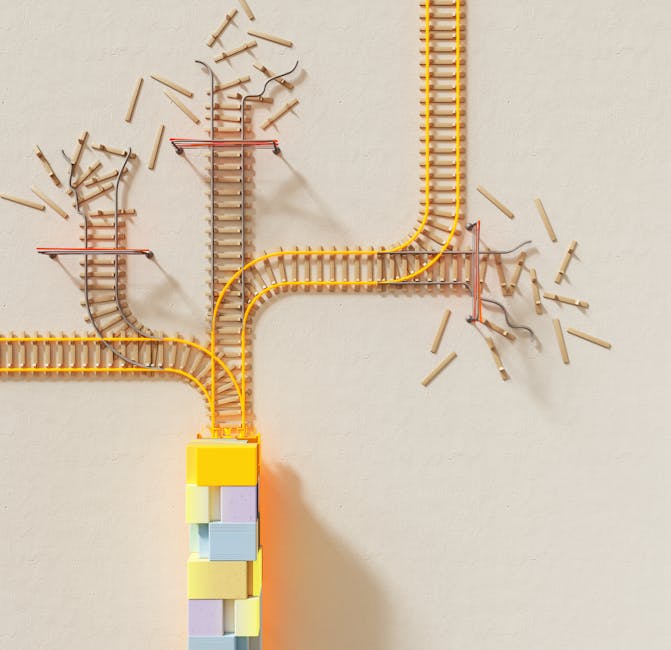

That shift makes 2026 a critical year. Algorithms adjust on the fly now, guided by real-time data and self-learning systems. A single tweak (an update to a recommendation rule, a change in how content is ranked) can reshape how millions experience a product or service. These aren’t theoretical risks. They’re live wires.

Which is exactly why ethical deployment is no longer a footnote or a PR checkbox. It’s a boardroom priority. Companies are realizing that trust evaporates fast in a black-box world. If your AI screws up, whether it shows bias, invades privacy, or simply can’t be explained, you’re not just facing user backlash. You’re risking lawsuits, regulation, and reputational nosedives.

Businesses that take ethics seriously tend to win over the long term. They’re not only guarding against risk; they’re building systems that flex with cultural values and shifting expectations. This isn’t about slowing down. It’s about scaling responsibly, making sure your AI is fit for the world it’s being used in.

Bias Isn’t Just a Bug

AI models don’t invent data; they learn from it. That’s problem one. If your training data echoes historical inequality, stereotypes, or one-sided perspectives, your AI will recreate that same bias at scale. It doesn’t care whether the data is fair. It just sees patterns.

Look at facial recognition systems. Several high-profile studies found these tools misidentified people of color far more often than white individuals, largely because they were trained mostly on lighter-skinned faces. Or take resume-screening AIs that unintentionally penalized women applying for roles in male-dominated industries, simply because the algorithm learned from decades of biased hiring data.

Bias often isn’t intentional, but the harm is real. And once deployed, flawed models can make thousands of decisions in minutes: hiring, lending, policing, diagnoses. That’s where impact meets scale.

So how do you fight it? First, know your data. Track where it comes from and who it may leave out. Second, stress-test your models. Test outputs across race, gender, and income level; don’t wait for problems to surface in the wild. Finally, bring in diverse voices. Not just during testing, but from the ground up in development, management, and oversight.
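
One way to make the stress-test step concrete is to compare outcome rates across groups. The sketch below is a minimal illustration, not a full fairness audit: the group labels, the record layout, and the function names `selection_rates` and `disparate_impact_ratio` are all assumptions made for this example.

```python
from collections import defaultdict


def selection_rates(records, group_key="group", outcome_key="selected"):
    """Return the share of positive outcomes for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for row in records:
        totals[row[group_key]] += 1
        if row[outcome_key]:
            positives[row[group_key]] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means parity."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a positive outcome
    return min(rates.values()) / highest


# Hypothetical screening decisions for two made-up groups.
decisions = (
    [{"group": "A", "selected": True}] * 6
    + [{"group": "A", "selected": False}] * 4
    + [{"group": "B", "selected": True}] * 3
    + [{"group": "B", "selected": False}] * 7
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 2))
```

In this made-up data, group A is selected 60% of the time and group B 30%, so the ratio is 0.5. A ratio below 0.8 is a common rule-of-thumb flag (the "four-fifths rule" used in US employment contexts), but it is a prompt to investigate, not a verdict.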

Bias in AI isn’t inevitable. But fixing it takes intention, structure, and the humility to know we don’t always see the flaws our models inherit.

Transparency Builds Trust

The era of black-box AI is running out of runway. In 2026, decision makers, from product teams to policymakers, expect more than just accurate outputs. They want to know why a model made a choice and what data fed into it. Blind trust doesn’t scale. Clear AI decisions do.

Whether it’s a loan rejection, a healthcare recommendation, or a content moderation flag, people deserve to understand the logic behind the AI. And regulators agree. Frameworks like the EU’s AI Act are pushing for explainability not just as a nice-to-have, but as a non-negotiable. If you can’t explain your model, you may not be allowed to use it.

The good news? There are tools built for this. SHAP, LIME, and counterfactual explanations help reveal how inputs shaped outputs. More transparent model architectures, from interpretable decision trees to attention-based deep learning models, are gaining favor. These tools don’t just tick compliance boxes; they help teams debug, improve, and trust their own systems.
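
To show what a counterfactual explanation looks like in practice, here is a small sketch in plain Python. It does not use SHAP or LIME; it is a brute-force search over one feature of a toy linear model. The model, its weights, and the feature names (`income_k`, `debt_k`) are hypothetical and exist only for illustration.

```python
def approve(applicant, weights, bias, threshold=0):
    """Toy linear model: approve when the weighted score clears the threshold."""
    score = bias + sum(weights[name] * applicant[name] for name in weights)
    return score >= threshold


def single_feature_counterfactual(applicant, weights, bias, feature, step, max_steps=1000):
    """Find the first value of one feature, moving in increments of `step`, that gets approval.

    Returns that value, or None if nothing within max_steps changes the outcome.
    """
    candidate = dict(applicant)  # copy so the original record is untouched
    for _ in range(max_steps):
        if approve(candidate, weights, bias):
            return candidate[feature]
        candidate[feature] += step
    return None


# Hypothetical model and applicant (amounts in thousands).
weights = {"income_k": 2, "debt_k": -3}
bias = -100
applicant = {"income_k": 40, "debt_k": 10}

needed_income = single_feature_counterfactual(
    applicant, weights, bias, feature="income_k", step=1
)
print(f"Rejected now; would be approved at income_k = {needed_income}")
```

For this applicant the search lands on an income of 65, which turns a bare rejection into a statement a person can act on: "with this debt level, approval starts at 65k." Real models need more careful search (multiple features, plausibility constraints), which is where dedicated tooling earns its keep.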

Bottom line: being transparent isn’t a feature. It’s the foundation. Want to see explainability put into practice? Check out Using AI to Personalize User Experiences in Real Time.

Consent, Privacy, and Personal Data

AI’s hunger for data hasn’t slowed. But in 2026, creators and companies can’t afford to treat personal information like free fuel. Global regulations (think GDPR in Europe, CCPA in California, and increasingly assertive rules from countries like Brazil and India) are tightening the leash. The message is clear: know what you’re collecting, why you’re collecting it, and whether you actually have permission.

Informed consent isn’t just a checkbox anymore. With AI systems making predictions and profiling users behind the scenes, users need to know not just that their data is used, but how it’s shaping their experience. That means plain language, easy opt-outs, and more control in the user’s hands.

Still, personalization isn’t the enemy; it’s about balance. Ethical operators know that respecting user agency and tailoring content or product recommendations can coexist. The key is transparency and restraint. If a system doesn’t need the data, don’t collect it. When in doubt, earn trust over time instead of scraping everything in sight.
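
"If a system doesn’t need the data, don’t collect it" can be enforced in code rather than left to policy. The sketch below assumes a hypothetical mapping from each processing purpose to the fields it needs (`FIELDS_BY_PURPOSE`) and a set of purposes the user has consented to; the names and the record layout are illustrative, not drawn from any specific regulation or library.

```python
# Hypothetical mapping from a processing purpose to the fields it actually needs.
FIELDS_BY_PURPOSE = {
    "recommendations": {"user_id", "viewed_items"},
    "billing": {"user_id", "billing_address"},
}


def minimize(record, purpose, consented_purposes):
    """Keep only the fields a consented purpose needs; otherwise keep nothing."""
    if purpose not in consented_purposes:
        return {}
    allowed = FIELDS_BY_PURPOSE.get(purpose, set())
    return {key: value for key, value in record.items() if key in allowed}


# Made-up event containing more than the recommender needs.
raw_event = {
    "user_id": "u-123",
    "viewed_items": ["sku-1", "sku-9"],
    "precise_location": (52.52, 13.40),
    "billing_address": "hypothetical street 1",
}

stored = minimize(raw_event, "recommendations", consented_purposes={"recommendations"})
print(stored)
```

The design choice here is an allowlist: anything not explicitly needed is dropped, so a new field added upstream never leaks into storage by default.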

Bottom line: You can still deliver smart, personalized AI. Just don’t do it behind a black curtain.

Accountability and Governance

When AI breaks down, whether it spits out misinformation, hallucinates data, or causes real-world harm, the question isn’t just “what happened?” but “who’s accountable?” The answer isn’t always clean. Developers, product teams, and executives all share responsibility. But brushing it off as the machine’s fault doesn’t cut it anymore.

That’s where internal AI governance frameworks come in. Organizations that treat ethics as more than a checkbox are already building internal protocols to audit models, track decision origins, and flag risk-prone outputs. This isn’t about slowing progress; it’s about keeping future lawsuits, bad press, and user fallout from burning through your bottom line.
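
Tracking decision origins usually starts with a structured record per decision. The following is one possible shape for such a record, using only the Python standard library; the field names and the `audit_record` helper are assumptions for this sketch, not a standard schema.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(model_version, features, output, flagged=False):
    """Build a log entry that ties an output to a model version and an input fingerprint."""
    # Sort keys so the same features always hash to the same value.
    payload = json.dumps(features, sort_keys=True).encode("utf-8")
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "input_sha256": hashlib.sha256(payload).hexdigest(),
        "output": output,
        "flagged": flagged,
    }


# Hypothetical decision from a made-up credit model.
record = audit_record(
    "credit-model-1.4.2",
    {"income_k": 40, "debt_k": 10},
    output="reject",
    flagged=True,
)
print(json.dumps(record, indent=2))
```

Hashing the input instead of storing it keeps personal data out of the log while still letting an auditor confirm which input produced which output.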

Human oversight still matters. In high-stakes verticals like healthcare, finance, and legal advice, saying no to full automation is often the smartest move. A trained eye can catch what a model misses or misinterprets. Build in kill switches. Create escalation paths. Treat the human in the loop as an asset, not a bottleneck.
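
Kill switches and escalation paths can be expressed as a small routing rule that sits in front of the model’s output. This is a minimal sketch under stated assumptions: the `route` function, the 0.9 confidence cutoff, and the action labels are hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str  # "auto", "human_review", or "halted"
    reason: str


def route(confidence, high_stakes, kill_switch_on, min_confidence=0.9):
    """Decide whether a model output may be acted on automatically."""
    if kill_switch_on:
        return Decision("halted", "kill switch engaged")
    if high_stakes:
        return Decision("human_review", "high-stakes case")
    if confidence < min_confidence:
        return Decision("human_review", "confidence below threshold")
    return Decision("auto", "confidence at or above threshold")


# Hypothetical cases: a routine request and a high-stakes one.
print(route(confidence=0.97, high_stakes=False, kill_switch_on=False))
print(route(confidence=0.97, high_stakes=True, kill_switch_on=False))
```

The order of the checks matters: the kill switch overrides everything, and a high-stakes case goes to a person no matter how confident the model is.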

Governance isn’t glamorous. But done right, it gives AI room to grow without becoming a liability.

Building for Inclusion and Accessibility

Designing AI that works for everyone isn’t a luxury; it’s a necessity. As AI tools reach more users around the world, the systems behind them need to reflect the full range of human experience. That means building models that account for accessibility needs, regional dialects, and cultural context, not just the dominant defaults.

It doesn’t always take a full systems overhaul to make a difference. Small changes, like adding alt text generation, tuning speech recognition for non-native accents, or improving contrast in interface layouts, can have outsized impacts. These micro-adjustments signal that your tech sees everyone, not just the majority.
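
Contrast is one of the few accessibility checks that reduces to arithmetic. The sketch below follows the relative luminance and contrast ratio formulas as defined in WCAG 2.x, as best understood here; the function names and the sample colors are choices made for this example.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to its linear-light value."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    """Weighted sum of linearized red, green, and blue channels."""
    r, g, b = (_linearize(value) for value in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """Ratio from 1 (identical colors) to 21 (black on white)."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


WHITE = (255, 255, 255)
for gray in [(118, 118, 118), (119, 119, 119)]:
    ratio = contrast_ratio(gray, WHITE)
    verdict = "passes" if ratio >= 4.5 else "fails"
    print(gray, round(ratio, 2), verdict, "the 4.5:1 AA target for normal text")
```

The two grays differ by a single step per channel, yet one clears the 4.5:1 target for normal-size text and the other does not, which is why this check is worth automating rather than eyeballing.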

But this goes deeper than good UI. Building equitable systems starts with intention. Are you testing for performance across disability groups? Are your teams representative of the user base? Inclusion doesn’t happen automatically; it has to be designed in from the start.

AI that ignores the margins will fail. AI that listens at the edges will lead.

Sustainable AI Use

The conversation around AI ethics usually centers on people: bias, privacy, consent. But there’s another issue quietly growing: the environmental impact.

Training large-scale AI models, especially deep neural networks, burns through insane amounts of energy. We’re talking megawatt-hours, in some cases, just to fine-tune a single large system. And while inference (running the model after it’s trained) draws less power per request, the scale of deployment across millions of devices and users adds up fast.

The good news? Efficiency is starting to matter again. From distillation techniques to sparse architectures, smart model design trims energy use without giving up much performance. Leading-edge labs and sustainability-minded companies are even building carbon tracking into their development cycles. It’s not flashy, but it’s forward-thinking, and it’s becoming part of what responsible AI looks like.
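
Carbon tracking can begin with back-of-the-envelope arithmetic: energy is devices times average power times hours, scaled by data-center overhead, and emissions are energy times the grid’s carbon intensity. The sketch below encodes that; every number in it is a placeholder, not a measurement, and the function names are made up for this example.

```python
def training_energy_kwh(gpu_count, avg_power_watts, hours, pue=1.0):
    """Energy for a run in kWh; `pue` scales for cooling and other facility overhead."""
    return gpu_count * avg_power_watts * hours / 1000 * pue


def emissions_kg(energy_kwh, grid_kg_co2_per_kwh):
    """Estimated CO2 in kilograms for a given grid carbon intensity."""
    return energy_kwh * grid_kg_co2_per_kwh


# Placeholder inputs for a hypothetical fine-tuning run.
energy = training_energy_kwh(gpu_count=8, avg_power_watts=300, hours=24, pue=1.2)
co2 = emissions_kg(energy, grid_kg_co2_per_kwh=0.4)
print(f"{energy:.2f} kWh, roughly {co2:.2f} kg CO2")
```

With these placeholder inputs the estimate is about 69 kWh and about 28 kg of CO2. Measured power draw and the actual grid mix will move those figures a lot, so treat the output as a starting point for comparison between runs, not an audit figure.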

In 2026, optimizing for both performance and planet isn’t a trade-off; it’s part of the job.

Bottom Line: Thoughtful Is Scalable

Ethical AI doesn’t mean tapping the brakes; it means knowing where the edges are before you speed up. The smartest companies in 2026 aren’t just rolling out tools fast. They’re building systems with risk planning baked into the blueprint: what happens if this model overcorrects? How will it age in the wild? Who’s affected if we get it wrong? These aren’t distractions. They’re part of good design.

The myth that ethics slows down innovation is drying up. Leaders in tech and policy are already treating it as a competitive advantage. Frameworks and guidelines aren’t red tape; they’re guardrails. More than that: they’re maps. They keep teams aligned, prevent PR disasters, and ensure long-term trust with users.

Moving forward, the most effective AI won’t just be powerful; it will be human-aware by design. Seamless integration, clearer communication, better outcomes. All made easier when you start with intent, not just code.